Why Government Portals Fail (and What Game Theory Can Fix)

Government digitalization keeps failing for the same reason. Not because of bad servers, but because of bad incentives. Here's how mechanism design changes the game.

Every few years, a European government launches a new digital portal. There's a press conference. A minister clicks a button. And then, within months, the system buckles. Appointments get scalped by bots. Citizens book three slots "just in case" and show up to none. Caseworkers drown in incomplete applications that should never have made it past the front door.

The usual response? More servers. Better UI. A redesign. And then the same thing happens again.

I've been thinking about this problem for a while, and I'm convinced the issue isn't technical. It's structural. These systems fail because nobody designed the incentives. They built a portal and hoped people would behave. That's not how humans work.

The Tragedy of the Commons, government edition

In 1968, ecologist Garrett Hardin described a problem he called the Tragedy of the Commons. Imagine a shared pasture. Every farmer has an incentive to add one more cow, because the benefit goes entirely to them while the cost (overgrazing) is shared by everyone. Individually rational. Collectively catastrophic.

Government appointment systems work exactly like this.

Booking a slot costs nothing. No deposit, no reputation at stake, no consequence for not showing up. So people book multiple appointments across different offices, because why not? The benefit (securing a slot) is theirs. The cost (a wasted slot that someone else could have used) is everyone else's problem.

In Berlin, no-show rates for certain government services hit 20-30%. That means roughly a quarter of the appointment capacity for those services evaporates because the system has no mechanism to align individual behavior with collective outcomes.

This isn't a technology problem. It's a game theory problem.

The Lemon Market in public services

There's another concept from economics that explains why government processing is so slow, even when caseworkers are competent and motivated.

In 1970, George Akerlof described the "Market for Lemons." When buyers can't distinguish good products from bad ones, the market breaks down. Sellers of good products can't prove their quality, so prices drop, and eventually only the bad products remain.

Government services have the same problem, just with applications instead of used cars. A caseworker sitting at their desk has no way of knowing whether the next application in the queue is complete or missing half the required documents. They won't know until they open it, read through it, and discover that the applicant forgot their proof of residence. Forty-five minutes gone. Next one.

The result: qualified, well-prepared applications wait in line behind incomplete ones. The system treats everyone the same, which sounds fair until you realize it punishes the people who actually did the work.

The Scalping Problem

And then there are the bots. In any system where a scarce resource (appointment slots) is distributed for free on a first-come-first-served basis, someone will build a bot to grab them faster than any human can click.

It happens with concert tickets. It happens with sneaker drops. And yes, it happens with government appointments. There are actual black markets where appointment slots at certain German government offices get resold.

A public good, privatized by a script. Not because the system was hacked, but because nobody thought about the incentive structure.

Mechanism Design: changing the rules, not the servers

This is where mechanism design comes in. It's a branch of game theory, sometimes called "reverse game theory." Instead of analyzing a game and predicting what players will do, you design the game so that players naturally do what's best for everyone.

The idea won Leonid Hurwicz, Eric Maskin, and Roger Myerson the Nobel Prize in Economics in 2007. It's used in spectrum auctions, kidney exchanges, and school assignment algorithms. But almost nobody has applied it to government services.

In AEGIS, the mechanism engine is one of the core services. Not because it's technically complex (it isn't, compared to the identity layer), but because it solves the hardest problem: getting humans to cooperate without forcing them.

Here's how.

Identity Staking: your reputation is your deposit

Every interaction through AEGIS is backed by a verified digital identity (the EU Digital Identity Wallet under eIDAS 2.0). You can't create fake accounts. You can't use bots, because bots don't have a European digital identity.

When you book an appointment slot, you "stake" your identity. One person, one active slot per matter. If you don't show up without a valid reason, your trust score drops. Not as punishment, but as a signal: this person tends to waste capacity.

Citizens with a track record of showing up and submitting complete applications get prioritized. Not because they paid more. Because they earned it through cooperative behavior.

No one is ever denied service. A low trust score means you wait a bit longer, and the system proactively offers you help to get your application right. It's coaching, not punishment.

The mechanism tackles three problems at once. No-shows drop because there's a reputational cost. Bots are eliminated because they can't get an eID. And scalping becomes impossible because slots are tied to verified identities, not browser sessions.
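To make the staking rules concrete, here is a minimal sketch in Python. It is not the AEGIS implementation: the class names, the 0-to-1 trust scale, and the size of the score adjustments are all assumptions chosen for illustration. It shows the two rules described above: one active slot per person per matter, and a trust score that reorders the queue without ever removing anyone from it.

```python
from dataclasses import dataclass, field


@dataclass
class Citizen:
    identity_id: str          # stands in for a verified eID (hypothetical field)
    trust_score: float = 1.0  # assumed scale: 0.0 (low) to 1.0 (high)


@dataclass
class SlotRegistry:
    # Maps (identity_id, matter) -> slot label, so each person can hold
    # at most one active slot per matter.
    active: dict = field(default_factory=dict)

    def book(self, citizen: Citizen, matter: str, slot: str) -> bool:
        key = (citizen.identity_id, matter)
        if key in self.active:
            return False  # identity is already staked on this matter
        self.active[key] = slot
        return True

    def close(self, citizen: Citizen, matter: str, showed_up: bool,
              valid_reason: bool = False) -> None:
        # Release the stake, then adjust the score (step sizes are assumptions).
        self.active.pop((citizen.identity_id, matter), None)
        if showed_up:
            citizen.trust_score = min(1.0, citizen.trust_score + 0.05)
        elif not valid_reason:
            citizen.trust_score = max(0.0, citizen.trust_score - 0.2)


def priority_order(citizens):
    # Higher trust is served earlier; the list is reordered, never filtered.
    return sorted(citizens, key=lambda c: c.trust_score, reverse=True)
```

A second booking for the same matter is refused while the first is still open, and a no-show without a valid reason moves the citizen further back in `priority_order` without excluding them.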

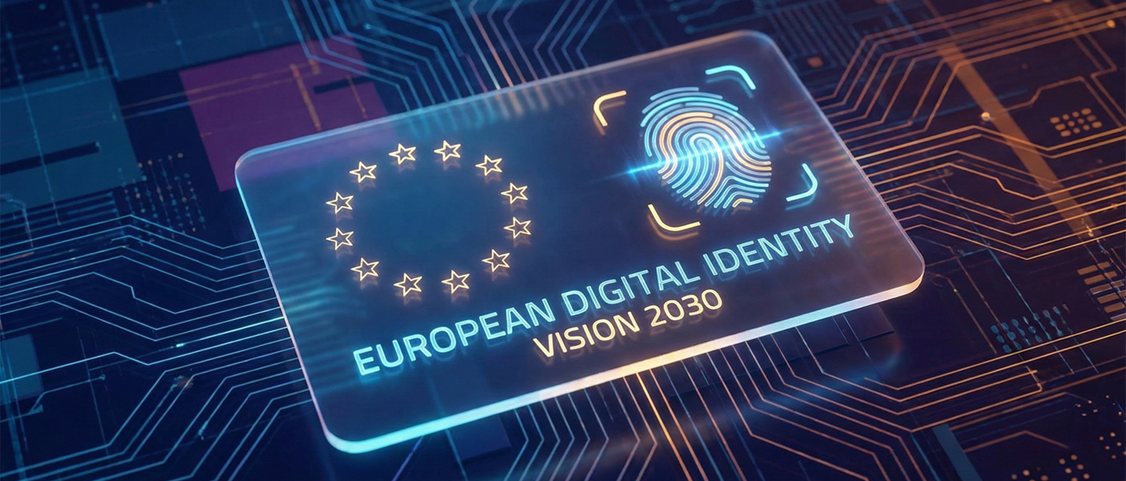

Signaling: prove your application is ready

Michael Spence won the Nobel Prize in 2001 for his work on signaling theory. The core idea: in situations where one side has more information than the other, the informed side can send credible signals to bridge the gap.

Applied to government services: a citizen knows whether their application is complete. The caseworker doesn't. So we let the citizen prove it.

AEGIS pre-validates applications with AI before they enter the queue. Documents are checked for completeness, dates are verified for consistency, formats are validated against requirements. If everything checks out, the application gets a "pre-validated" flag, and the citizen enters the fast track.

The citizen is incentivized to get their documents right, because it directly benefits them (faster processing). The caseworker benefits because they receive clean, structured packages instead of guessing whether the next file is complete or a waste of time.

This is signaling in action: the citizen sends a credible signal ("my application is ready") backed by verifiable evidence (the pre-validation result). The information asymmetry dissolves.
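As a rough illustration of the signal, here is a rule-based sketch in Python. AEGIS uses AI for pre-validation. This sketch swaps in three plain checks (completeness, date sanity, file format) to show the shape of the result. The required document list, the allowed formats, and every name below are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical requirements for one kind of application.
REQUIRED_DOCS = {"passport", "proof_of_residence", "application_form"}
ALLOWED_FORMATS = {"pdf", "jpg", "png"}


@dataclass
class Document:
    kind: str
    file_format: str
    issued: date


def prevalidate(docs, today):
    """Return (is_prevalidated, problems) for a list of documents."""
    problems = []
    # Completeness: every required document kind must be present.
    for kind in sorted(REQUIRED_DOCS - {d.kind for d in docs}):
        problems.append(f"missing: {kind}")
    for d in docs:
        # Format: only accepted file types pass.
        if d.file_format.lower() not in ALLOWED_FORMATS:
            problems.append(f"bad format: {d.kind} ({d.file_format})")
        # Date consistency: a document cannot be issued in the future.
        if d.issued > today:
            problems.append(f"issue date in the future: {d.kind}")
    return (not problems, problems)


def queue_for(docs, today):
    # A clean result is the credible signal that earns the fast track.
    ok, _ = prevalidate(docs, today)
    return "fast_track" if ok else "standard"
```

The list of problems matters as much as the flag: it is what lets the system tell the citizen exactly what to fix before a caseworker ever opens the file.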

Dynamic Load Balancing: flexibility as currency

Not every interaction needs to happen in real time. Some citizens need an answer today. Others just need it this week.

AEGIS offers a trade: if you're flexible on timing (your application gets processed asynchronously within a defined window), you're guaranteed completion without a physical appointment. If you need it now, you join the live queue.

This is a form of non-monetary pricing. Instead of charging money (which would discriminate based on income), we let citizens "pay" with flexibility. Those who can wait reduce the load during peak hours. Those who can't still get served, just through the synchronous channel.

The result is what operations research calls "peak shaving." The same capacity serves more people because the demand curve gets smoother. No additional servers required.
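Here is a toy version of that smoothing in Python. The function name, the hourly buckets, and the numbers are made up for illustration and are not taken from the AEGIS modeling. Urgent requests stay in the hour they arrive, and flexible ones are only processed when there is spare capacity.

```python
def peak_shave(urgent, flexible, capacity):
    """Spread flexible requests into spare capacity.

    urgent, flexible: request counts per hour (lists of equal length).
    capacity: requests that can be handled per hour.
    Returns (load_per_hour, unplaced_flexible_requests).
    Urgent demand is never moved, so an hour whose urgent demand alone
    exceeds capacity stays overloaded.
    """
    load = list(urgent)
    backlog = 0
    for hour in range(len(load)):
        backlog += flexible[hour]              # flexible work arriving this hour
        spare = max(0, capacity - load[hour])  # room left after urgent work
        done = min(spare, backlog)             # fill only the spare room
        load[hour] += done
        backlog -= done
    return load, backlog


urgent = [2, 8, 3, 1]
flexible = [1, 6, 2, 0]
naive = [u + f for u, f in zip(urgent, flexible)]  # everything handled on arrival
smoothed, unplaced = peak_shave(urgent, flexible, capacity=10)
```

With these numbers, handling everything on arrival gives hourly loads of `[3, 14, 5, 1]`, which overshoots a capacity of 10 in the second hour. After shifting, the loads are `[3, 10, 9, 1]`: the same 23 requests, no hour over capacity.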

The Nash Equilibrium we actually want

In game theory, a Nash Equilibrium is a state where no player can improve their outcome by changing their strategy alone. The problem with most government systems is that they settle into a bad equilibrium: everyone spams, everyone waits, everyone loses.

AEGIS is designed to shift the equilibrium. When cooperative behavior (showing up, submitting complete applications, being flexible on timing) consistently produces better outcomes for the individual, it becomes the dominant strategy. Not because we force it, but because the rules make it the obvious choice.
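The shift can be shown with a two-player toy game in Python. The payoffs are invented for illustration (higher is better) and the function names are hypothetical. The only point is the structure: without a cost for spamming, the single equilibrium is the bad one, and adding a reputational cost moves it.

```python
from itertools import product

STRATEGIES = ("cooperate", "spam")


def pure_nash(payoffs):
    """Return all pure-strategy Nash equilibria of a two-player game.

    payoffs maps (row_strategy, col_strategy) -> (row_payoff, col_payoff).
    A cell is an equilibrium if neither player gains by deviating alone.
    """
    equilibria = []
    for r, c in product(STRATEGIES, repeat=2):
        row_best = all(payoffs[(r, c)][0] >= payoffs[(alt, c)][0]
                       for alt in STRATEGIES)
        col_best = all(payoffs[(r, c)][1] >= payoffs[(r, alt)][1]
                       for alt in STRATEGIES)
        if row_best and col_best:
            equilibria.append((r, c))
    return equilibria


# Illustrative payoffs only: spamming (multi-booking) pays off individually.
status_quo = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "spam"): (0, 4),
    ("spam", "cooperate"): (4, 0),
    ("spam", "spam"): (1, 1),
}


def with_reputation_cost(payoffs, cost):
    """Subtract a reputational cost from a player's payoff when that player spams."""
    return {
        (r, c): (pr - cost * (r == "spam"), pc - cost * (c == "spam"))
        for (r, c), (pr, pc) in payoffs.items()
    }


before = pure_nash(status_quo)                          # [("spam", "spam")]
after = pure_nash(with_reputation_cost(status_quo, 2))  # [("cooperate", "cooperate")]
```

In the status quo game, spamming is the best reply to anything the other player does, so both end up at `(1, 1)`. With a cost of 2 attached to spamming, cooperating becomes the best reply to anything, and the only equilibrium is `(3, 3)`.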

The numbers from our modeling:

| Metric | Status Quo | With AEGIS |
|---|---|---|
| No-Show Rate | 15-20% | under 1% |
| Application Errors | 30-40% | under 2% |
| Bot Traffic | High | 0% |
| System State | Overload | Stable Equilibrium |

These aren't aspirational targets. Within the model, they're the mathematical consequence of correctly designed incentives.

Trust through architecture, not enforcement

The most important thing about this approach: nobody is forced to do anything. Citizens aren't penalized for being new to the system. They aren't blocked for making mistakes. They aren't charged money for basic government services.

What changes is the structure of the interaction. The game is designed so that doing the right thing is also the easy thing. That's the core insight of mechanism design, and it's why I believe it belongs at the heart of any serious government digitalization effort.

We're not building a better portal. We're building a better game.

This is the third post in a series about AEGIS. The first, "Bureaucracy Shouldn't Separate Families", explains why this project exists. The second, "Inside AEGIS: A First Look at the Architecture", covers the technical foundation. The next post will dive into the security and trust architecture, and why zero-persistence changes everything.