Running 49 Language Models From a Single Gateway

How I wired up 49 LLMs across five providers into one unified gateway, and why nearly half of them cost nothing extra.

Last week I counted the language models connected to my personal AI gateway. Forty-nine. Five different providers. Twenty-three of them add exactly zero to the bill.

That was not the plan.

How It Started

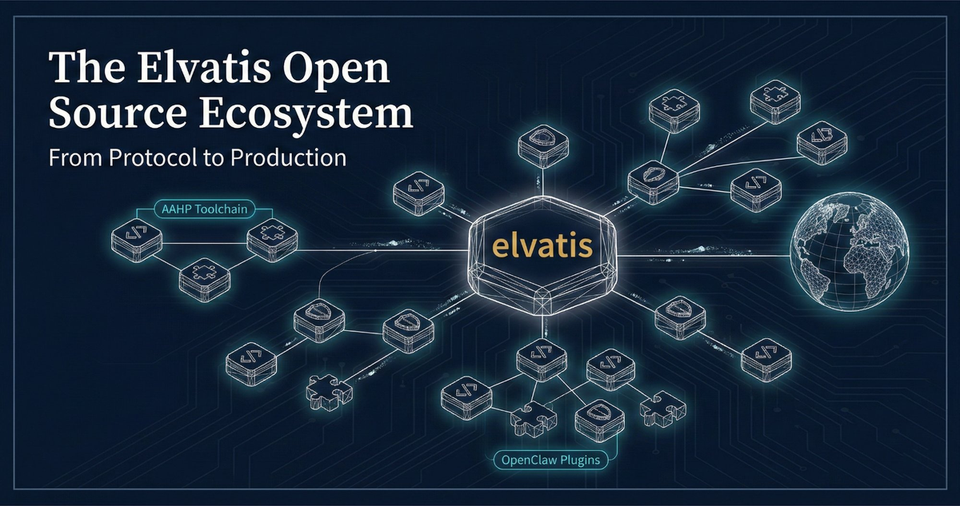

I run an open-source project called OpenClaw, a gateway that sits between messaging channels (WhatsApp, webchat, Discord) and AI models. Think of it as a reverse proxy for LLMs. One configuration file, multiple models, automatic failover.

The original setup was simple: one model for chat, one for code. Then I added image generation. Then web search. Then I realized I could route different tasks to different models based on what they're good at, and the whole thing snowballed.

The Provider Stack

Five authentication providers feed into the gateway:

- Anthropic (direct API): Claude Opus 4.6, Sonnet 4.6, and their older siblings. The heavy hitters for complex reasoning.

- GitHub Copilot: This is the surprise. Twenty-three models, all included in a standard Copilot subscription. Opus, Sonnet, GPT-5.x, Gemini, even Grok. Zero additional cost.

- Google Gemini CLI: Nine models, including Gemini 3 Pro, Gemini 2.5 Pro with its million-token context window, and the image generation models.

- OpenAI Codex: Seven models from GPT-5.1 through GPT-5.3 Codex. The coding specialists.

- Perplexity: Two models for real-time web research. Sonar Pro for quick lookups, Deep Research for thorough analysis.

The Free Tier Secret

Here is the part that surprised me most: GitHub Copilot's model catalog has quietly become one of the best deals in AI. For the price of a Copilot subscription, you get access to Claude Opus, GPT-5.2 Codex, Gemini 3 Pro, and a dozen others.

The catch? Context windows are sometimes smaller than with direct API access (125k vs. 195k tokens for Claude). Rate limits exist but are generous for personal use. In my experience, for 90% of tasks you will never notice the difference.

My rule: always try the Copilot variant first. Only fall back to direct API when you need the full context window or hit rate limits.
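Written down as code, the rule is a two-condition check. This is a minimal Python sketch, not OpenClaw's implementation: it assumes the gateway knows each route's context window and whether Copilot is currently rate-limited, and the Variant class and pick_variant function are hypothetical names. The 125k and 195k figures are the ones quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One route to a model family (hypothetical structure)."""
    alias: str
    context_window: int  # maximum prompt size in tokens for this route


# Context windows as quoted in this post; real limits may differ.
COPILOT_OPUS = Variant("copilot-opus", 125_000)
DIRECT_OPUS = Variant("opus", 195_000)


def pick_variant(prompt_tokens: int, copilot_rate_limited: bool) -> Variant:
    """Try the Copilot route first; use the direct API only when needed."""
    if prompt_tokens > DIRECT_OPUS.context_window:
        raise ValueError("prompt is too large for either route")
    if copilot_rate_limited or prompt_tokens > COPILOT_OPUS.context_window:
        return DIRECT_OPUS  # full context window or rate-limit fallback
    return COPILOT_OPUS  # default: included in the Copilot subscription


print(pick_variant(40_000, copilot_rate_limited=False).alias)   # copilot-opus
print(pick_variant(150_000, copilot_rate_limited=False).alias)  # opus
print(pick_variant(40_000, copilot_rate_limited=True).alias)    # opus
```

The order of the checks matters: the cheap route is the default, and the paid route has to be justified by either prompt size or a rate limit.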

Routing by Task

Not every model is good at everything. Running them through a single gateway lets me route by task type:

- Planning and architecture: Opus (via Copilot, free). The best reasoning model I have access to, and it costs nothing.

- Code generation: GPT-5.2 Codex or Grok Code Fast (both via Copilot). Fast, accurate, free.

- Code review: Sonnet 4.6 (via Copilot). Thorough, catches subtle bugs.

- Quick triage: Haiku 4.5 (via Copilot). Ultra-fast, good enough for labeling and sorting.

- Deep research: Gemini 2.5 Pro (million-token context) or Perplexity Sonar for web-grounded answers.

- Image generation: Gemini's image models ("nano-banana" internally). Generates consistent blog images from text prompts.
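A table like this maps naturally onto a dictionary lookup. The sketch below assumes task types have already been labeled (for example by the triage model), and the alias names are illustrative placeholders rather than the ones in my config.

```python
# Illustrative task-type -> alias table mirroring the list above.
# The first alias in each list is the default choice.
ROUTES: dict[str, list[str]] = {
    "planning": ["copilot-opus"],
    "codegen": ["copilot-codex", "copilot-grok-code-fast"],
    "review": ["copilot-sonnet"],
    "triage": ["copilot-haiku"],
    "research": ["gemini-2.5-pro", "sonar-pro"],
    "image": ["nano-banana"],
}


def route(task_type: str, needs_web: bool = False) -> str:
    """Pick an alias for a task type; web-grounded research goes to Perplexity."""
    if task_type not in ROUTES:
        raise ValueError(f"no route for task type {task_type!r}")
    if task_type == "research" and needs_web:
        return "sonar-pro"
    return ROUTES[task_type][0]


print(route("codegen"))                   # copilot-codex
print(route("research"))                  # gemini-2.5-pro
print(route("research", needs_web=True))  # sonar-pro
```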

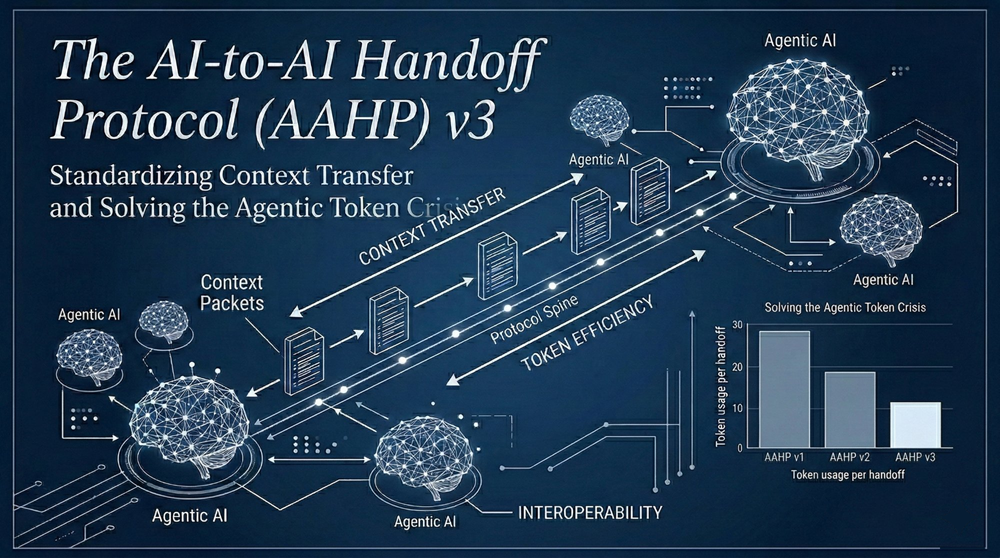

This follows what I call the AAHP principle: expensive models think, cheap models execute. The planning phase gets Opus-level reasoning. The execution phase gets the fastest model that can handle it. Token costs drop by 90%+ compared to running Opus for everything.
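The split can be sketched as a two-stage pipeline: one call to the expensive planner, then one cheap call per step. This is a minimal Python sketch under stated assumptions: call_model stands in for whatever function actually sends a prompt through the gateway, and the planner and executor aliases and the prompt wording are hypothetical. The fake model at the bottom exists only so the sketch runs offline.

```python
from typing import Callable

# call_model(alias, prompt) -> reply is a hypothetical stand-in for the
# function that sends a prompt through the gateway.
CallModel = Callable[[str, str], str]

PLANNER = "copilot-opus"    # expensive model: called once per task
EXECUTOR = "copilot-haiku"  # cheap, fast model: called once per step


def plan_then_execute(task: str, call_model: CallModel) -> list[str]:
    """Ask the planner for steps, then hand each step to the executor."""
    plan = call_model(PLANNER, f"Break this task into steps, one per line:\n{task}")
    steps = [line.strip() for line in plan.splitlines() if line.strip()]
    return [call_model(EXECUTOR, f"Do this step:\n{step}") for step in steps]


# Fake model so the sketch runs offline; it records which alias was called.
calls: list[str] = []


def fake_model(alias: str, prompt: str) -> str:
    calls.append(alias)
    if alias == PLANNER:
        return "write tests\nimplement function\nupdate docs"
    return f"done: {prompt.splitlines()[-1]}"


results = plan_then_execute("add a CSV export", fake_model)
print(results)  # ['done: write tests', 'done: implement function', 'done: update docs']
print(calls)    # ['copilot-opus', 'copilot-haiku', 'copilot-haiku', 'copilot-haiku']
```

The expensive model is called once per task no matter how many steps the plan has; every additional step only costs a call to the cheap model.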

The Configuration

The entire setup lives in a single JSON configuration file. Each model gets an alias (because nobody wants to type github-copilot/gpt-5.2-codex every time), and the gateway handles authentication, routing, and failover automatically.
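To make the alias idea concrete, here is a sketch of loading aliases from JSON and resolving them. The schema (a top-level "aliases" object mapping short names to provider/model IDs) is an assumption for illustration, not OpenClaw's actual format, and every model ID except github-copilot/gpt-5.2-codex is a made-up placeholder.

```python
import json

# Hypothetical config shape; OpenClaw's real schema may differ.
# Model IDs other than github-copilot/gpt-5.2-codex are placeholders.
CONFIG_JSON = """
{
  "aliases": {
    "copilot-codex": "github-copilot/gpt-5.2-codex",
    "copilot-opus": "github-copilot/claude-opus",
    "opus": "anthropic/claude-opus"
  }
}
"""


def load_aliases(text: str) -> dict[str, str]:
    """Parse the config and return the alias -> "provider/model" mapping."""
    return json.loads(text)["aliases"]


def resolve(name: str, aliases: dict[str, str]) -> tuple[str, str]:
    """Turn an alias, or a full "provider/model" ID, into (provider, model)."""
    full_id = aliases.get(name, name)
    provider, sep, model = full_id.partition("/")
    if not sep or not provider or not model:
        raise ValueError(f"unknown alias or malformed model ID: {name!r}")
    return provider, model


aliases = load_aliases(CONFIG_JSON)
print(resolve("copilot-codex", aliases))  # ('github-copilot', 'gpt-5.2-codex')
print(resolve("opus", aliases))           # ('anthropic', 'claude-opus')
```

Falling through to the raw name means a full provider/model ID still works when no alias exists, so aliases stay a convenience rather than a requirement.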

Failover is the underrated feature. If Anthropic's API goes down, the gateway silently switches to the Copilot variant of the same model family. If Copilot hits a rate limit, it falls back to Google. The user never notices.
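Failover amounts to walking an ordered chain of aliases until one call succeeds. The sketch below assumes provider calls raise a dedicated exception on outages and rate limits; ProviderError, call_model, and the alias gemini-pro are hypothetical, and the chain simply mirrors the Anthropic, then Copilot, then Google order described above.

```python
from typing import Callable


class ProviderError(Exception):
    """Hypothetical error a provider call raises on an outage or rate limit."""


def complete_with_failover(
    prompt: str, chain: list[str], call_model: Callable[[str, str], str]
) -> tuple[str, str]:
    """Try each alias in order; return (alias_used, reply) from the first success."""
    errors = []
    for alias in chain:
        try:
            return alias, call_model(alias, prompt)
        except ProviderError as exc:
            errors.append(f"{alias}: {exc}")  # remember the failure, then move on
    raise RuntimeError("every provider in the chain failed: " + "; ".join(errors))


# Fake provider calls so the sketch runs offline.
OUTAGES = {"opus": "503 service unavailable", "copilot-opus": "429 rate limited"}


def fake_model(alias: str, prompt: str) -> str:
    if alias in OUTAGES:
        raise ProviderError(OUTAGES[alias])
    return f"{alias} answered: {prompt}"


used, reply = complete_with_failover("hello", ["opus", "copilot-opus", "gemini-pro"], fake_model)
print(used)   # gemini-pro
print(reply)  # gemini-pro answered: hello
```

Returning the alias that answered keeps the switch invisible to the user while still letting the gateway record which provider actually served the request.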

What I Learned

Model diversity beats model quality. Having access to 49 models sounds excessive, but the real value is resilience. No single provider has 100% uptime. No single model is best at everything. The combination is stronger than any individual model.

Free tiers are production-viable. I was skeptical: I expected Copilot's included models to be rate-limited into uselessness. In practice, for a personal or small-team setup, they handle the load fine.

Image generation from LLM providers is surprisingly good. Google's Gemini image models produce consistent, professional results. Not Midjourney-level artistic, but perfect for blog feature images and technical illustrations. The image at the top of this post was generated by one of these models.

Aliases matter more than you think. When you have 49 models, naming is everything. copilot-opus vs opus tells me instantly whether I'm hitting the free tier or burning API credits. Small thing, big impact on cost awareness.
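A naming convention is only useful if it holds, so it is worth checking mechanically. This sketch assumes a simple rule, that an alias starts with "copilot-" exactly when it resolves to a github-copilot/ model ID, and flags any alias that breaks it in either direction; the alias map and model IDs are placeholders.

```python
# Placeholder alias map; "codex" breaks the convention on purpose.
ALIASES = {
    "copilot-opus": "github-copilot/claude-opus",
    "opus": "anthropic/claude-opus",
    "codex": "github-copilot/gpt-5.2-codex",
}


def misnamed_aliases(aliases: dict[str, str]) -> list[str]:
    """Return aliases whose "copilot-" prefix disagrees with their provider."""
    bad = []
    for alias, full_id in aliases.items():
        routes_to_copilot = full_id.startswith("github-copilot/")
        named_as_copilot = alias.startswith("copilot-")
        if routes_to_copilot != named_as_copilot:
            bad.append(alias)
    return sorted(bad)


print(misnamed_aliases(ALIASES))  # ['codex']
```

Running a check like this whenever the config changes keeps the name a reliable signal of whether a request is covered by the subscription or billed per token.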

The Numbers

- 49 models configured

- 44 unique aliases

- 5 authentication providers

- 23 models at zero cost (GitHub Copilot)

- 2 image generation models

- 2 real-time web search models

- 1 configuration file

The gateway is open source. The models are (mostly) free. The hard part was not the technology. It was figuring out which model to use for what, and building the discipline to route to a cheap model when an expensive one is not needed.

That is the actual skill in multi-model AI: not having access to the best model, but knowing when the best model is overkill.